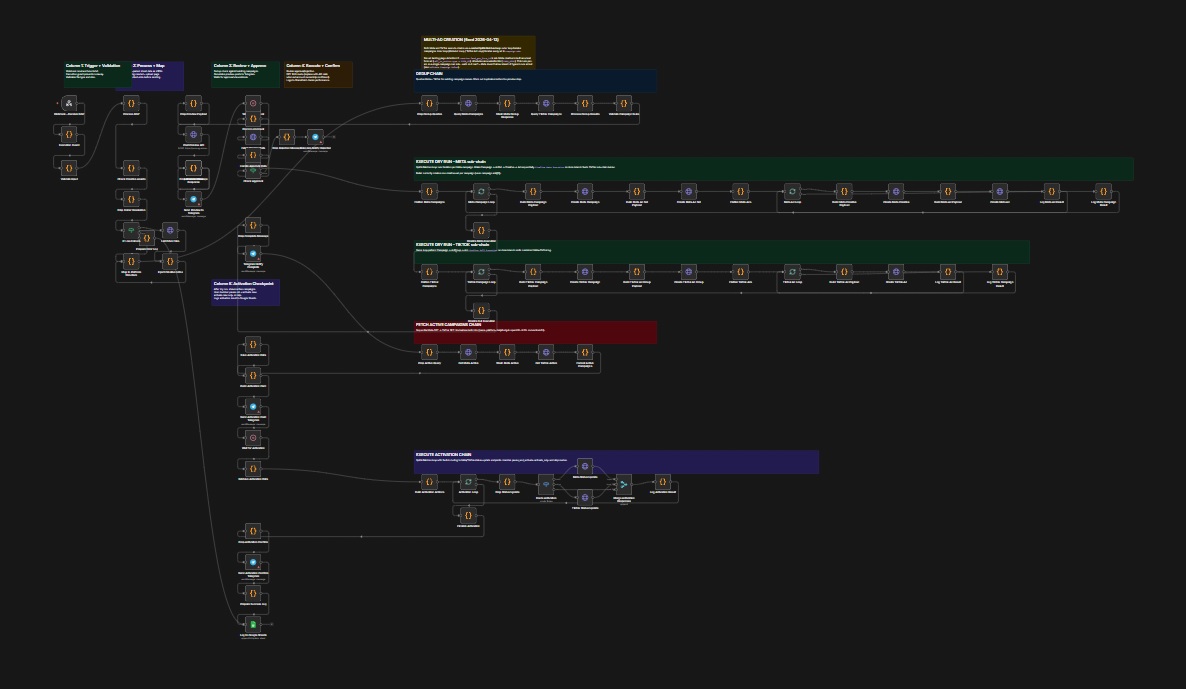

AI-powered support, shared between marketing and customer service.

The chatbot replaced a USD5,000/month vendor contract. The more significant change is operational: marketing and technical support now share responsibility for keeping the knowledge base current. Marketing owns product announcements, campaign context, and pricing updates; support owns integration guidance, error resolution, and account queries. Two teams now maintain an AI system together as a default part of their workflow.

01

The situation.

A premium services company operating in Singapore was paying USD5,000 per month for a third-party chatbot vendor. The solution handled basic customer queries with limited customisation and no visibility into conversation quality or volume.

02

The prototype.

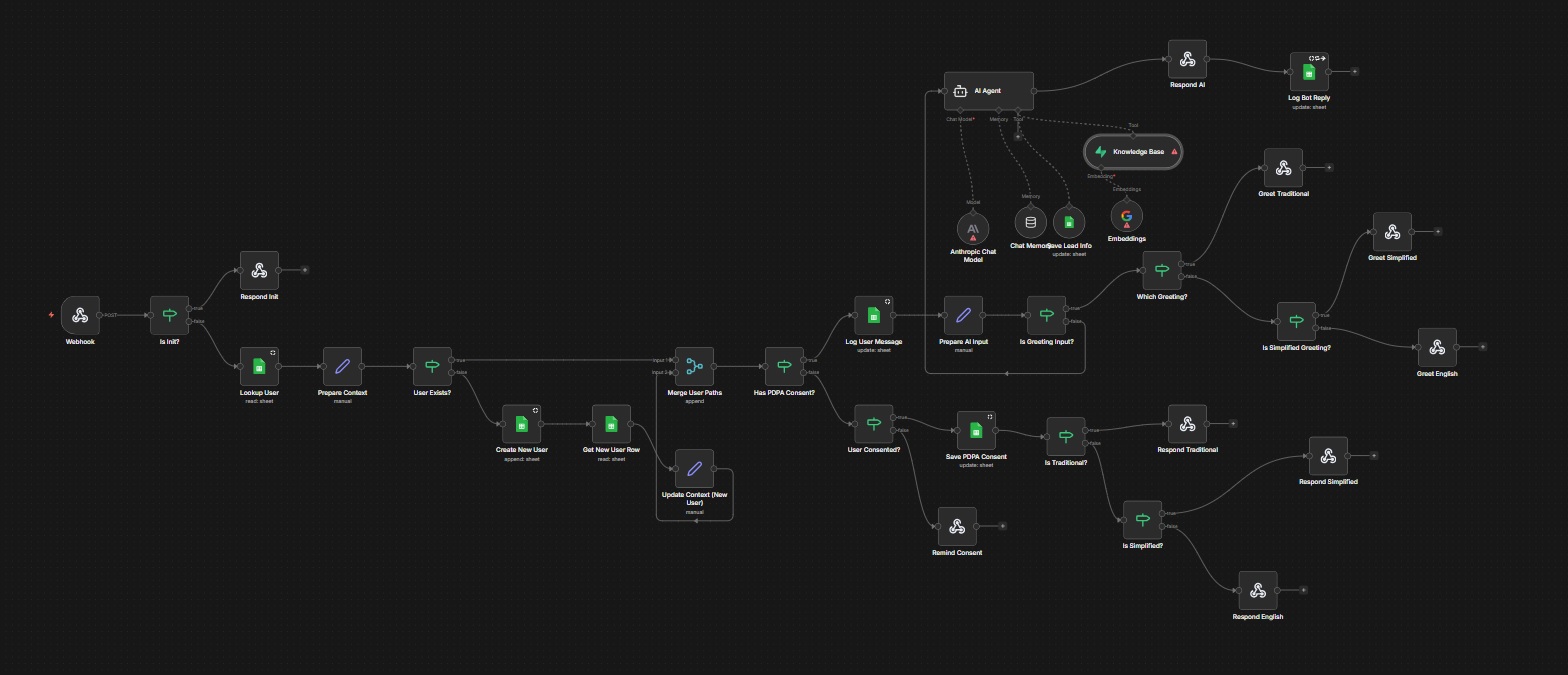

A RAG-based replacement was built in under a day using n8n as the orchestration layer, a Supabase vector store for knowledge retrieval, and the Claude API for response generation. Language detection routed queries to the correct response path for English and Chinese speakers.
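The routing and retrieval steps described above can be sketched roughly as follows. This is a minimal illustration, not the production workflow: the source does not say how language detection was implemented, so the CJK-character heuristic, the function names (`detect_language`, `retrieve`, `build_prompt`), the toy three-dimensional embeddings, and the placeholder knowledge-base entries are all assumptions. The in-memory cosine ranking stands in for a similarity query against the Supabase vector store, and the assembled prompt is what would then be sent to the Claude API.

```python
import math
import re

# Assumed heuristic: any CJK Unified Ideograph marks the query as Chinese.
CJK_PATTERN = re.compile(r"[\u4e00-\u9fff]")


def detect_language(text: str) -> str:
    """Return "zh" if the text contains CJK ideographs, otherwise "en"."""
    return "zh" if CJK_PATTERN.search(text) else "en"


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine similarity of two equal-length vectors (0.0 if either is zero)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def retrieve(query_vec: list[float], documents: list[dict],
             language: str, top_k: int = 2) -> list[dict]:
    """Rank same-language documents by similarity to the query vector."""
    candidates = [d for d in documents if d["lang"] == language]
    ranked = sorted(
        candidates,
        key=lambda d: cosine_similarity(query_vec, d["embedding"]),
        reverse=True,
    )
    return ranked[:top_k]


def build_prompt(question: str, passages: list[dict]) -> str:
    """Assemble retrieved passages and the question into one prompt string."""
    context = "\n".join(f"- {p['text']}" for p in passages)
    return f"Answer using only this context:\n{context}\n\nQuestion: {question}"


# Placeholder knowledge base with toy embeddings (hypothetical content).
KNOWLEDGE_BASE = [
    {"id": "en-pricing", "lang": "en", "text": "Placeholder pricing note.",
     "embedding": [1.0, 0.0, 0.0]},
    {"id": "en-integration", "lang": "en", "text": "Placeholder integration note.",
     "embedding": [0.0, 1.0, 0.0]},
    {"id": "zh-pricing", "lang": "zh", "text": "价格说明占位文本。",
     "embedding": [1.0, 0.0, 0.0]},
]

question = "How much does the service cost?"
language = detect_language(question)
passages = retrieve([0.9, 0.1, 0.0], KNOWLEDGE_BASE, language, top_k=1)
prompt = build_prompt(question, passages)
```

Filtering by language before ranking keeps each response path on its own knowledge-base entries; whether the real system partitioned the store this way is not stated in the source.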

03

The result.

The prototype matched or exceeded the vendor on response quality while adding conversation transcript logging and a dashboard API for operations review. The vendor contract was terminated. Annual saving: approximately SGD81,000, equivalent to the USD60,000 per year (12 × USD5,000) previously paid to the vendor.

Note

Architecture lineage.

Built on the pilot n8n instance. The RAG retrieval pattern and modular webhook design established here informed all subsequent production systems.

SGD81,000/yr

vendor contract terminated following prototype validation

Technical highlights

n8n

Supabase pgvector

Claude API

RAG

Webhook

- RAG architecture with Supabase pgvector for semantic knowledge retrieval

- Language detection gateway routing queries for English and Chinese

- Conversation transcript logging with timestamp and session tracking

- Dashboard API endpoint for operations team transcript review
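As an illustration of the last two highlights, the sketch below shows one way transcript entries could carry a timestamp and session ID and be serialised for a dashboard endpoint. It is a hedged, in-memory example only: the source does not describe the storage schema or the API shape, so `TranscriptEntry`, `TranscriptLog`, `dashboard_payload`, and every field name here are hypothetical.

```python
import json
import uuid
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


def utc_now_iso() -> str:
    """Current time as a timezone-aware ISO 8601 string in UTC."""
    return datetime.now(timezone.utc).isoformat()


@dataclass
class TranscriptEntry:
    session_id: str   # groups all messages of one conversation
    role: str         # "user" or "assistant"
    language: str     # e.g. "en" or "zh"
    text: str
    timestamp: str = field(default_factory=utc_now_iso)


class TranscriptLog:
    """In-memory stand-in for a persistent transcript table."""

    def __init__(self) -> None:
        self._entries: list[TranscriptEntry] = []

    def record(self, session_id: str, role: str,
               language: str, text: str) -> TranscriptEntry:
        """Append one message to the log and return the stored entry."""
        entry = TranscriptEntry(session_id, role, language, text)
        self._entries.append(entry)
        return entry

    def by_session(self, session_id: str) -> list[TranscriptEntry]:
        """Return the messages of one session in insertion order."""
        return [e for e in self._entries if e.session_id == session_id]

    def dashboard_payload(self, session_id: str) -> str:
        """Serialise one session as JSON, as a review endpoint might return it."""
        entries = self.by_session(session_id)
        return json.dumps(
            {
                "session_id": session_id,
                "message_count": len(entries),
                "messages": [asdict(e) for e in entries],
            },
            ensure_ascii=False,  # keep Chinese text readable in the output
        )


log = TranscriptLog()
session_a = str(uuid.uuid4())
session_b = str(uuid.uuid4())
log.record(session_a, "user", "en", "Where can I find my invoice?")
log.record(session_a, "assistant", "en", "Placeholder answer.")
log.record(session_b, "user", "zh", "如何重置密码?")
payload = json.loads(log.dashboard_payload(session_a))
```

Keeping the session ID on every entry lets a reviewer pull a whole conversation with a single filter, which is the property the operations review described above depends on.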